Cyanite’s proprietary tagging engine shifts catalog management from subjective manual input to objective, audio-only analysis. Tracks are processed in parallel, averaging about 10 seconds per file, and the system generates consistent multi-dimensional metadata (genres, moods, energy levels, and instrumentation) across enterprise-scale libraries.
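To put the 10-second average in perspective, here is a rough back-of-the-envelope sketch in Python. It is not Cyanite's implementation: the function name, the catalog size, and the number of concurrent workers are all hypothetical, and it assumes every track takes exactly the average time.

```python
import math


def estimate_wall_clock_seconds(
    num_tracks: int,
    workers: int,
    seconds_per_track: float = 10.0,  # average per-file time cited above
) -> float:
    """Rough wall-clock estimate when tracks are analyzed in parallel batches.

    Assumes (hypothetically) that `workers` tracks run at once and that
    each track takes exactly `seconds_per_track`.
    """
    if num_tracks < 0 or workers < 1:
        raise ValueError("num_tracks must be >= 0 and workers must be >= 1")
    batches = math.ceil(num_tracks / workers)
    return batches * seconds_per_track


# Hypothetical catalog of 100,000 tracks with 50 concurrent workers.
parallel = estimate_wall_clock_seconds(100_000, 50)
sequential = estimate_wall_clock_seconds(100_000, 1)
print(f"parallel:   {parallel / 3600:.1f} hours")    # 5.6 hours
print(f"sequential: {sequential / 86400:.1f} days")  # 11.6 days
```

Under these assumed numbers, the same catalog that would take well over a week one file at a time finishes in a single working day. Actual throughput depends on how much concurrency the service provides, which the source does not specify.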

This approach addresses the ‘memory-reliance’ trap, where inconsistent human tagging leaves high-value tracks overlooked. How will your organization use standardized metadata to drive more precise algorithmic recommendations across DSPs?

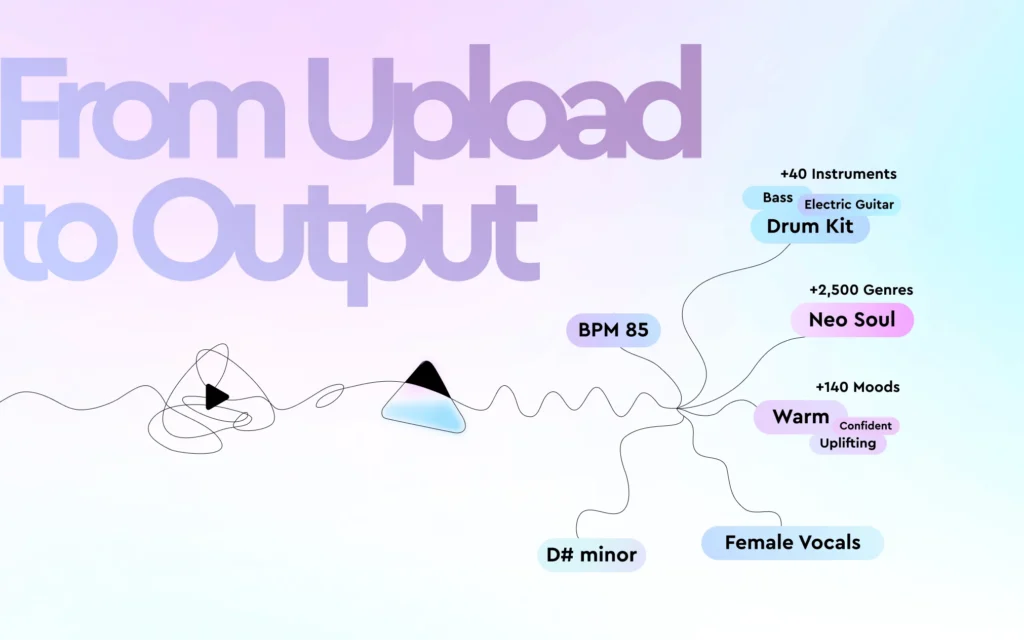

Curated by MusicResearch.com from Cyanite AI. View original technical breakdown: From Upload to Output: How Cyanite Turns Audio into Reliable Metadata at Scale